Build Voice Agents.

From Scratch.

An 8-week live bootcamp taught by Dr. Sreedath Panat (MIT PhD). Build real-time voice agents from scratch using ASR, TTS, LLMs, and streaming pipelines.

Can't attend live? All sessions are recorded for lifetime access.

“The biggest untapped opportunity in AI is voice, and LLMs will unlock it at scale.”

Voice is the next

trillion-dollar interface.

Voice AI investment has grown 7x in two years. Three new unicorns emerged in early 2026. Gartner predicts $80B in contact center savings. The industry is just getting started.

Voice AI Market Size

AI Voice Generators — Global

Source: Grand View Research · Markets and Markets

Voice AI Unicorns & Funding

3 new unicorns in early 2026 alone

Sources: TechCrunch · Crunchbase

“By 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention, leading to a 30% reduction in operational costs.”

The world's top leaders agree:

voice is the next interface.

From Meta to NVIDIA to a16z — the consensus is clear. Voice is becoming the most natural, primary way people will interact with AI.

“Voice is going to be a way more natural way of interacting with AI than text.”

Mark Zuckerberg

CEO, Meta

“Interacting with Gemini should feel conversational and intuitive — an in-depth conversation using your voice.”

Sundar Pichai

CEO, Google

“Conversational AI is the next web browser.”

Mustafa Suleyman

CEO, Microsoft AI

“Digital humans will revolutionize industries. Interacting with computers will become as natural as interacting with humans.”

Jensen Huang

CEO, NVIDIA

“For consumers, voice will be the first — and perhaps the primary — way people interact with AI.”

Olivia Moore

Partner, a16z

“Voice is the next interface for AI.”

Mati Staniszewski

CEO, ElevenLabs

Built for engineers who want to go deep.

- Engineers transitioning into voice AI, speech systems, or conversational AI engineering

- Developers building voice-powered products — receptionists, assistants, agents

- Engineers who want to go beyond using LLMs — to building real-time voice pipelines

- Researchers who need production engineering depth alongside theory

Leave production-ready.

Voice AI interview question:

“Design a real-time voice agent that handles tool calling, memory, and barge-in interruptions with sub-second latency. Walk me through the architecture.”

Asked at companies building voice AI products. You will have a complete answer.

- Build production-grade voice agents from scratch — ASR, LLM, TTS, tools, memory

- Design low-latency, real-time streaming voice pipelines with WebSockets

- Implement tool-calling, memory, and agentic workflows in voice systems

- Deploy voice agents locally, in the browser, and in the cloud

- Build industry-level portfolio projects from hands-on capstone work

The complete toolkit.

One voice agent bootcamp.

Go from zero to building production-grade, tool-using, real-time voice agents in Python.

Voice Agent Architecture

Understand the full pipeline: audio input, ASR, LLM reasoning, tool calling, TTS, and audio output.

Speech-to-Text Pipelines

Build transcription systems with Whisper, faster-whisper, and voice activity detection.

Text-to-Speech Systems

Generate natural voice responses using Piper, Coqui TTS, and modern synthesis engines.

LLM Reasoning Layer

Connect language models as the brain. Design prompts for spoken, concise, interruption-friendly replies.

Tool Use & Memory

Give your voice agent real capabilities: search, schedule, calculate, remember, and take action.

Real-Time Streaming

Build low-latency streaming pipelines with WebSockets, incremental ASR, and barge-in handling.

Production Architecture

Design modular, scalable systems with fallbacks, logging, observability, and cost optimization.

End-to-End Projects

Build complete voice agents: AI receptionist, meeting assistant, research assistant, and more.

8 days of building.

One complete education.

Each day builds on the previous. By the end, you'll have built a complete real-time voice agent.

Voice Agents & System Architecture

Tuesday · 2 PM IST

- What is a voice agent vs chatbot vs conversational AI

- Core architecture: Audio → ASR → LLM → TTS → Audio

- Batch vs streaming pipeline design

- Key challenges: latency, interruptions, turn-taking

- Overview of the voice agent ecosystem and frameworks

- Build a simple end-to-end voice interaction loop
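The core architecture listed above (Audio → ASR → LLM → TTS → Audio) can be sketched as a single turn-based loop. This is a minimal illustration, not the bootcamp's code: `VoiceLoop`, `fake_asr`, `fake_llm`, and `fake_tts` are hypothetical names, and the stand-in stages only pass text through so the sketch runs without a model or microphone.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures; a real ASR, LLM, or TTS engine
# would be wrapped in a function that matches one of these.
ASR = Callable[[bytes], str]
LLM = Callable[[str], str]
TTS = Callable[[str], bytes]


@dataclass
class VoiceLoop:
    asr: ASR
    llm: LLM
    tts: TTS

    def run_turn(self, audio_in: bytes) -> bytes:
        """One batch (non-streaming) turn: audio -> text -> reply -> audio."""
        transcript = self.asr(audio_in)
        reply = self.llm(transcript)
        return self.tts(reply)


# Stand-in stages (assumptions for illustration only).
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the bytes are already text


def fake_llm(text: str) -> str:
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend encoded text is synthesized audio


loop = VoiceLoop(asr=fake_asr, llm=fake_llm, tts=fake_tts)
audio_out = loop.run_turn(b"hello")
```

Keeping each stage behind a plain callable makes it possible to swap a local model for an API-based one without touching the loop.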

Speech-to-Text (ASR) Foundations

Tuesday · 2 PM IST

- How automatic speech recognition works

- Audio preprocessing, feature extraction, decoding

- Whisper, faster-whisper, Distil-Whisper deep dive

- Implement local transcription pipeline in Python

- Microphone recording and live transcription

- Voice Activity Detection (VAD) for speech pipelines
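To make the idea of voice activity detection concrete, here is a rough sketch that flags frames by energy. It is a deliberately simple stand-in, not Silero VAD or any model-based detector; the frame size, threshold, and function names are assumptions chosen for illustration.

```python
import math
from typing import List, Sequence


def frame_rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one frame of samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def detect_speech(
    samples: Sequence[float],
    frame_size: int = 160,   # 10 ms at 16 kHz (assumed sample rate)
    threshold: float = 0.1,  # assumed energy threshold; tune per microphone
) -> List[bool]:
    """Return one flag per frame: True where energy exceeds the threshold."""
    flags = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        flags.append(frame_rms(frame) > threshold)
    return flags


# Synthetic input: 20 ms of silence followed by 20 ms of a 440 Hz tone.
silence = [0.0] * 320
tone = [0.5 * math.sin(2 * math.pi * 440 * n / 16000) for n in range(320)]
flags = detect_speech(silence + tone)
```

An energy threshold reacts to any loud sound, which is one reason speech pipelines often use a trained detector instead.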

Text-to-Speech & Voice Output

Tuesday · 2 PM IST

- How modern TTS systems work

- Trade-offs: latency, quality, controllability, cost

- Piper, Coqui TTS, ElevenLabs comparison

- Build a TTS pipeline in Python

- Real-time speech playback

- Local vs API-based TTS systems

LLMs as the Brain of a Voice Agent

Tuesday · 2 PM IST

- Why ASR + TTS alone is not enough

- LLMs as the reasoning and decision-making layer

- Prompting for voice: concise, spoken-style responses

- Transcript → LLM → spoken response loop

- Conversation history and context management

- Designing voice agent personalities
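One possible approach to conversation history and context management is to keep the system prompt and only the most recent turns that fit a budget. The sketch below counts words as a rough proxy for tokens, which is an assumption; `trim_history` and the message format are hypothetical and not tied to any particular LLM API.

```python
from typing import Dict, List

Message = Dict[str, str]  # assumed shape: {"role": ..., "content": ...}


def trim_history(messages: List[Message], max_words: int) -> List[Message]:
    """Keep system messages plus the newest turns that fit a word budget."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    budget = max_words - sum(len(m["content"].split()) for m in system)

    kept: List[Message] = []
    for msg in reversed(turns):  # walk from newest to oldest
        cost = len(msg["content"].split())
        if cost > budget:
            break  # older turns are dropped once the budget is spent
        kept.append(msg)
        budget -= cost
    return system + list(reversed(kept))


history = [
    {"role": "system", "content": "Reply in one short spoken sentence."},
    {"role": "user", "content": "What time do you open tomorrow"},
    {"role": "assistant", "content": "We open at nine"},
    {"role": "user", "content": "And on Sunday"},
]
trimmed = trim_history(history, max_words=14)
```

With a 14-word budget the oldest user turn is dropped, while the system prompt and the two most recent turns are kept.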

Tool Use, Memory & Agentic Workflows

Tuesday · 2 PM IST

- What makes a voice system an actual agent

- Tool calling: calculator, web search, scheduling, APIs

- Short-term vs long-term memory

- Context engineering: keep, compress, control latency

- Build a tool-using voice agent pipeline

- Example: voice assistant that searches and takes actions
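Tool calling and short-term memory can be illustrated with a small registry and a bounded history. This is a sketch under stated assumptions: the `call` dictionary stands in for the structured tool call an LLM would emit, and `TOOLS`, `tool`, and `Agent` are hypothetical names rather than any framework's API.

```python
from collections import deque
from typing import Any, Callable, Deque, Dict, Tuple

TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("add")
def add(a: float, b: float) -> str:
    return str(a + b)


class Agent:
    def __init__(self, max_items: int = 4) -> None:
        # Short-term memory: the oldest entries fall off automatically.
        self.history: Deque[Tuple[str, str]] = deque(maxlen=max_items)

    def handle(self, user_text: str, call: Dict[str, Any]) -> str:
        """Run the tool named in `call` and record both sides in memory."""
        self.history.append(("user", user_text))
        fn = TOOLS.get(call["name"])
        if fn is None:
            result = f"Unknown tool: {call['name']}"
        else:
            result = fn(**call["args"])
        self.history.append(("tool", result))
        return result


agent = Agent(max_items=4)
result = agent.handle(
    "what is two plus three",
    {"name": "add", "args": {"a": 2, "b": 3}},
)
```

Long-term memory would need a persistent store; the bounded deque here only covers the current conversation.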

Real-Time Streaming Voice Agents

Tuesday · 2 PM IST

- Turn-based vs real-time voice systems

- WebSockets, incremental ASR, partial transcripts

- Streaming TTS, endpointing, turn detection

- Barge-in and user interruption handling

- Build a real-time streaming voice loop

- Understanding production latency sources
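Barge-in handling comes down to stopping playback as soon as the user starts talking. The sketch below shows one way to do that with `asyncio` task cancellation. Playback and the "user spoke" signal are both simulated with `asyncio.sleep`, and the function names are assumptions; a real system would wire in streaming TTS output and a VAD event instead.

```python
import asyncio
from typing import List


async def speak(chunks: List[str], played: List[str]) -> None:
    """Stand-in for streaming TTS playback: 'plays' one chunk at a time."""
    for chunk in chunks:
        played.append(chunk)
        await asyncio.sleep(0.05)  # simulated playback time per chunk


async def run_with_barge_in(chunks: List[str], interrupt_after: float) -> List[str]:
    """Play chunks, but cancel playback if the user interrupts first."""
    played: List[str] = []
    playback = asyncio.create_task(speak(chunks, played))
    # Simulated VAD event: the user starts speaking after `interrupt_after` seconds.
    user_spoke = asyncio.create_task(asyncio.sleep(interrupt_after))

    done, _ = await asyncio.wait(
        {playback, user_spoke}, return_when=asyncio.FIRST_COMPLETED
    )
    if user_spoke in done and not playback.done():
        playback.cancel()  # barge-in: stop speaking immediately
        try:
            await playback
        except asyncio.CancelledError:
            pass
    else:
        user_spoke.cancel()  # playback finished without interruption
    return played


chunks = [f"chunk-{i}" for i in range(10)]
played = asyncio.run(run_with_barge_in(chunks, interrupt_after=0.12))
```

Only the chunks played before the interruption end up in `played`, which is also what the agent should treat as "heard" when it updates the conversation history.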

Production-Grade Architecture

Tuesday · 2 PM IST

- Designing a robust, modular voice agent system

- Frontend, backend, ASR/LLM/TTS services, memory layer

- Fallbacks, retries, and failure handling

- Cost optimization and model selection

- Safety, filtering, and guardrails

- Framework comparison: plain Python + WebSockets vs Pipecat/LiveKit
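Fallbacks and retries can be sketched as a loop over an ordered list of providers, retrying each with exponential backoff before moving to the next. The provider functions, retry count, and delays below are hypothetical values chosen for illustration; a production system would likely catch narrower exception types.

```python
import time
from typing import Callable, Optional, Sequence


def call_with_fallback(
    providers: Sequence[Callable[[str], str]],
    text: str,
    retries: int = 2,
    base_delay: float = 0.01,
) -> str:
    """Try each provider in order, retrying with exponential backoff."""
    last_error: Optional[Exception] = None
    for provider in providers:
        for attempt in range(retries):
            try:
                return provider(text)
            except Exception as exc:  # broad catch keeps the sketch short
                last_error = exc
                time.sleep(base_delay * (2 ** attempt))
    raise RuntimeError("all providers failed") from last_error


# Hypothetical providers: a failing remote service and a local backup.
def flaky_primary(text: str) -> str:
    raise TimeoutError("primary timed out")


def local_backup(text: str) -> str:
    return f"[local] {text}"


result = call_with_fallback([flaky_primary, local_backup], "hello")
```

Because every retry adds delay, a voice agent usually keeps the retry count and backoff small so the fallback kicks in before the user notices a pause.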

Final Project: End-to-End Voice Agent

Tuesday · 2 PM IST

- Build a complete voice agent from scratch

- Full pipeline: mic → VAD → ASR → LLM → tools → TTS

- Choose: receptionist, meeting, research, or desktop assistant

- Testing, debugging, and improving your system

- Deployment: local, browser-based, and cloud setups

- Extending into real-world products

The tools that power

production voice AI.

You won't just learn theory — you'll build with the same models and tools used in real voice agent systems.

Whisper

OpenAI ASR

faster-whisper

Optimized ASR

Distil-Whisper

Lightweight ASR

Piper TTS

Local Synthesis

Coqui TTS

Neural Voices

Silero VAD

Voice Detection

Claude / GPT

LLM Layer

WebSockets

Real-Time Infra

Python

Core Language

Build something

you can actually ship.

Day 8 culminates in a hands-on capstone project that ties together everything you've learned.

AI Receptionist

An intelligent phone receptionist that answers questions, routes calls, and handles bookings.

Meeting Assistant

A real-time voice assistant that joins meetings, takes notes, and extracts action items.

Research Assistant

A voice-driven research agent that searches, summarizes, and answers complex questions.

Desktop Assistant

A personal voice assistant that controls your desktop, launches apps, and automates tasks.

Scheduling Agent

A voice agent that manages calendars, sets reminders, and coordinates meetings.

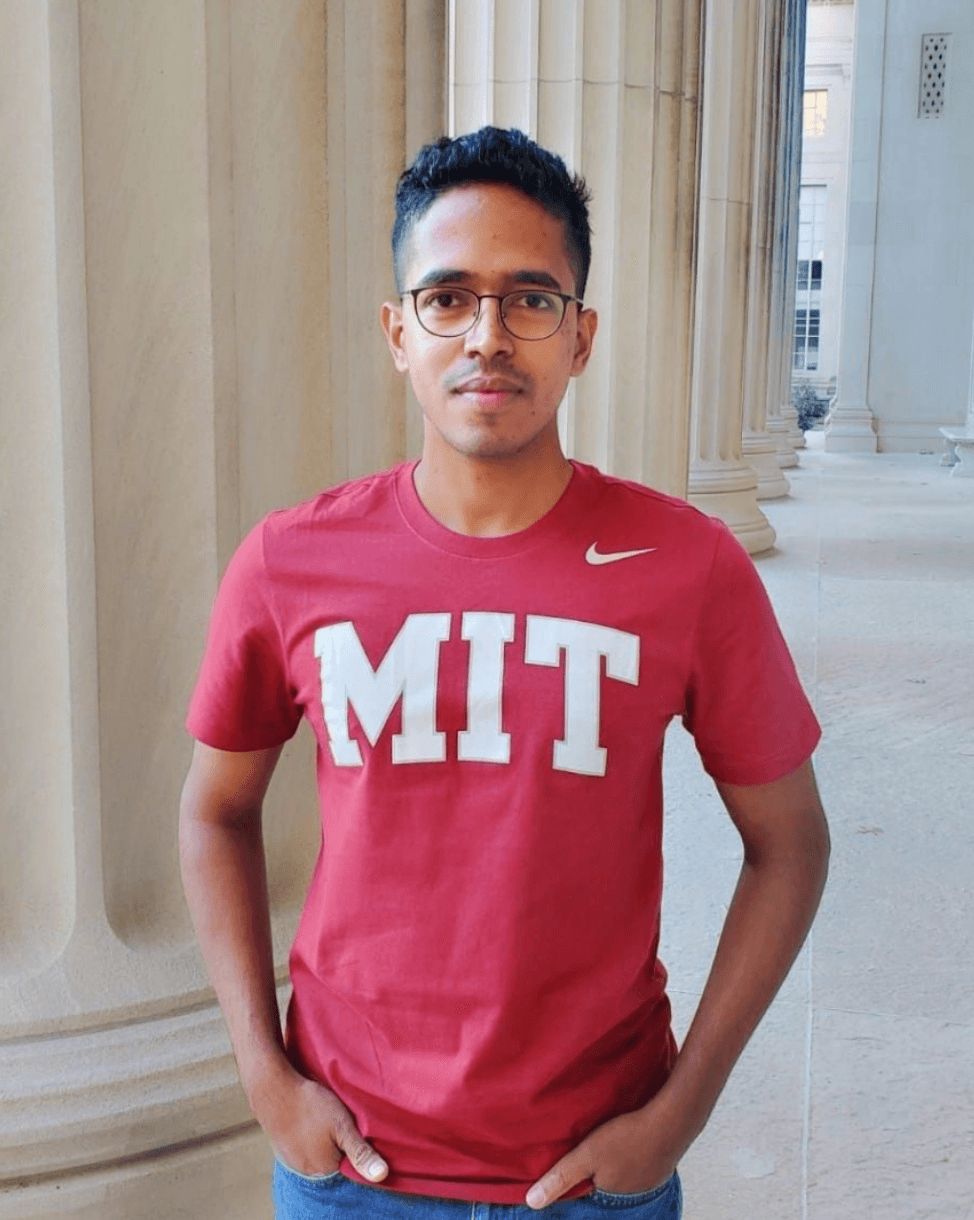

Dr. Sreedath Panat

MIT PhD · Co-founder & Director, Vizuara AI Labs

Dr. Sreedath holds a PhD from MIT and is the co-founder and director of Vizuara AI Labs. An IIT Madras graduate and department gold medalist, he has built a 200K+ subscriber YouTube channel and co-authored the Manning bestseller “Build DeepSeek from Scratch”.

His teaching philosophy: visual intuition first, mathematical rigour second, hands-on implementation always. Every concept is taught from scratch — no hand-waving.

Have questions? Reach out at sreedath@vizuara.com

- All 8 core lectures personally delivered

- PhD from MIT — rigorous technical foundation

- IIT Madras graduate & department gold medalist

- Winner of the Langmuir Award

- Co-author of Manning bestseller "Build DeepSeek from Scratch"

- 200K+ YouTube subscribers · 115K+ LinkedIn followers

Start your research with a head start.

Don't start from scratch. Tell us your topic of interest and we'll generate a personalised research roadmap and an initial version of your research paper — delivered asynchronously, so you can hit the ground running from day one.

What's in the kit

Personalised Research Roadmap (PDF)

You tell us your topic of interest. We generate an 8-week structured plan with milestones, deliverables, and acceptance criteria — tailored to your specific voice AI research area. Includes literature review scope, audio data pipeline design, experiment matrix, and manuscript timeline. Delivered asynchronously.

Initial Research Paper Draft

We generate an initial version of your research paper — research questions framed, methodology outlined, related work surveyed, and experiment setup defined. You don't start with a blank page — you start with a 6–8 page scaffold ready to build on. Delivered asynchronously based on your topic.

Curated Paper Reading List

12–15 handpicked papers relevant to your topic with reading order, key takeaways, and connections between papers. Includes a literature matrix template for systematic tracking.

Starter Code Template

A clean, documented codebase scaffold for your voice AI research project — audio loading, training loop, evaluation pipeline, and experiment config. Ready to run on day one.

Example research topics

Your roadmap is personalised to your background and goals. Here are some voice AI topics our students have worked on:

Low-Latency Streaming ASR with Conformer Encoders for Voice Agents

Expressive Neural TTS with Emotion and Prosody Control

End-to-End Speech-to-Speech Translation with Nano-Scale Models

Real-Time Voice Activity Detection and Barge-In for Conversational Agents

Knowledge Distillation of Whisper for On-Device Speech Recognition

Voice Cloning Ethics and Watermarking for Generative Speech Models

Speaker Diarization for Multi-Party Voice Assistants

Retrieval-Augmented Voice Agents for Domain-Specific Dialog

What mentorship includes

Fully async: personalized feedback at every stage, no calls required.

Target: Publishable Paper

The goal is a research paper. Your mentors guide you from topic selection through experiments to a publication-ready manuscript.

Every Step Guided

Literature review, experiment design, ablation studies, writing — your mentors walk you through every step of the research process so you never feel stuck.

Industry + Research Exposure

Get career strategy and deep research guidance. Both industry and academic perspectives in one mentorship.

Paper Reading Guidance

Curated reading lists, paper discussion, and feedback on how to extract and apply insights from the literature.

Actionable Next Steps

Every interaction ends with clear deliverables and deadlines. You always know exactly what to do next.

Ready to build your

voice agent?

Join the 8-day bootcamp and go from zero to building real-time, production-grade voice agents from scratch.

Starts May 12, 2026 · Every Tuesday 2 PM IST · 100% Hands-On